Hello everyone,

We are starting a series of posts that show how to use AWS CloudFormation.

In the next posts, we are going to showcase AWS CloudFormation by creating one RDS instance and one DMS setup with a CloudFormation stack.

First, a quick introduction to AWS CloudFormation.

“AWS CloudFormation provides a common language for you to model and provision AWS and third party application resources in your cloud environment.”

In practice, it is a JSON or YAML file in which we describe the AWS resources we want to create.
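To make this concrete, here is a minimal sketch of what such a file can contain, built as a Python dictionary and printed as JSON. It is not the script used in this series (that one comes in the next article). The logical name `DemoDatabase`, the parameter `DBPassword`, and all property values are made up for illustration.

```python
import json


def build_template():
    """Return a minimal CloudFormation template as a plain dict."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Illustrative sketch: one RDS PostgreSQL instance",
        # The password is passed in at stack creation time instead of
        # being hard-coded in the template.
        "Parameters": {
            "DBPassword": {"Type": "String", "NoEcho": True, "MinLength": 8},
        },
        "Resources": {
            # "DemoDatabase" is a hypothetical logical name.
            "DemoDatabase": {
                "Type": "AWS::RDS::DBInstance",
                "Properties": {
                    "Engine": "postgres",
                    "DBInstanceClass": "db.t3.micro",
                    "AllocatedStorage": "20",
                    "MasterUsername": "demo_admin",
                    "MasterUserPassword": {"Ref": "DBPassword"},
                },
            }
        },
    }


if __name__ == "__main__":
    # Print the JSON text that could be pasted into the template editor.
    print(json.dumps(build_template(), indent=2))
```

The same structure can be written in YAML. The `Resources` section is the only required one, and each resource needs at least a `Type`.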

Let’s code!

The first step is to sign in to the AWS Console. In the search field, type CloudFormation, as in the picture below.

Click on CloudFormation to open the service console.

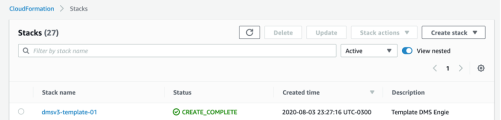

Click on Stacks.

After this, click on Create stack and select “With new resources”.

Next, click on Create template in Designer and you will be redirected to a page like the one below.

Click on Template to open the code editor.

The next step is to write a script that deploys a service. In this example, we are going to use one script for DMS and one for RDS PostgreSQL. The scripts used in this article are available in the next article.

Before executing the script, validate it by clicking the button highlighted in the image below.
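If you prefer to validate outside the console, the same check is exposed through the CloudFormation API. The sketch below assumes `cf_client` is a boto3 CloudFormation client (for example `boto3.client("cloudformation")`) with valid credentials. The helper name `validate_template_body` is ours, not part of any library.

```python
def validate_template_body(cf_client, template_body):
    """Ask CloudFormation to validate a template and return its parameter names.

    cf_client is assumed to be a boto3 CloudFormation client. If the
    template is invalid, the call raises an exception instead of returning.
    """
    response = cf_client.validate_template(TemplateBody=template_body)
    # A valid template comes back with the list of parameters it declares.
    return [p["ParameterKey"] for p in response.get("Parameters", [])]
```

This validation checks the template syntax. It does not guarantee that the resources can be created.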

The result is either OK or an error. If the result is OK, you can create the stack by clicking the button highlighted in the image below.
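Stack creation can also be started from code instead of the console button. This is only a sketch: `cf_client` is assumed to be a boto3 CloudFormation client, and the helper name and the stack name you pass in are your own choices.

```python
def create_stack_from_template(cf_client, stack_name, template_body, parameters=None):
    """Start creating a stack and return the StackId reported by CloudFormation.

    parameters is an optional dict of template parameter names to values.
    """
    response = cf_client.create_stack(
        StackName=stack_name,
        TemplateBody=template_body,
        # CloudFormation expects parameters as a list of key/value records.
        Parameters=[
            {"ParameterKey": key, "ParameterValue": value}
            for key, value in (parameters or {}).items()
        ],
    )
    return response["StackId"]
```

The call returns as soon as creation has started, so the stack is usually still in progress when you get the ID back.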

You can follow the execution by opening the Events page. The output is similar to the image below.
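The events shown on that page can also be read through the API. A minimal sketch, again assuming `cf_client` is a boto3 CloudFormation client. The helper name is hypothetical, and only the first page of events is read.

```python
def summarize_stack_events(cf_client, stack_name):
    """Return one readable line per stack event, oldest first."""
    response = cf_client.describe_stack_events(StackName=stack_name)
    lines = []
    # The API lists the newest event first, so reverse for chronological order.
    for event in reversed(response["StackEvents"]):
        # ResourceStatusReason is optional and mostly present on failures.
        reason = event.get("ResourceStatusReason", "")
        lines.append(
            f'{event["LogicalResourceId"]}: {event["ResourceStatus"]} {reason}'.rstrip()
        )
    return lines
```

When a stack fails, the reason text on the first failed event is usually the quickest way to see what went wrong.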

In the next articles, we are going to look at the source code and use AWS DMS to replicate data from an Oracle database to RDS PostgreSQL.