We all know SAR (System Activity Report); however, it is sometimes difficult to visualize a large amount of data or to extract meaningful long-term information from it.

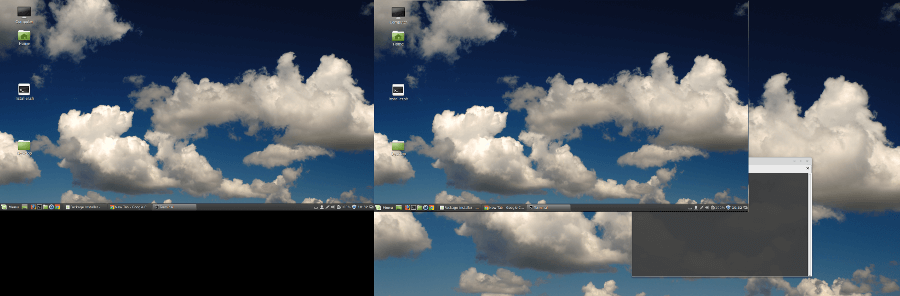

How wonderful would it be to have a graphical visualization of this data? Well, it’s pretty simple using KSAR.

KSAR is a BSD-licensed, Java-based application that graphs all parameters from the data collected by the Unix sar utilities. The graphs can be exported to PDF, JPG, PNG, CSV, TXT and other formats.

The project code is here. The latest version is KSar2-0.0.4.

As an example, see below an I/O graph for the month of December, generated from a database server:

To use it, the first thing you need is SAR data. There are basically four options to get it:

A. Collect from current server.

B. Extract from other server using direct SSH connection.

C. Use a generated SAR file.

D. Run the Java tool from the client server.

Personally, I prefer option C, to avoid putting any code on client servers and to work in the least intrusive way possible.

I also don’t use option B because we usually don’t have a direct connection to the client server; access is often through jumpboxes or similar.

There is a third reason: when choosing option A or B, only the current day’s data is collected automatically, but with option C you can include all the data you need. It only needs to be available on the server.
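To see which days are actually available on the server before generating anything, a small helper like the one below could be used. This is only a sketch: the function name `available_sar_days` is my own, and it assumes the binary data files follow the saDD naming and live in /var/log/sa unless another directory is given.

```shell
#!/bin/bash
# Print the day numbers for which binary sar data files (saDD) exist.
# The directory defaults to /var/log/sa; pass another one as the first argument.
available_sar_days() {
    local dir="${1:-/var/log/sa}"
    local f
    for f in "$dir"/sa[0-9][0-9]; do
        [ -e "$f" ] || continue   # the glob did not match any file
        echo "${f##*/sa}"         # strip everything up to the final "/sa"
    done
}

# Usage: available_sar_days
```

The text reports named sarDD are skipped on purpose, since only the binary saDD files can be read back with sar -f.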

For reference regarding Option D, please check this link.

By the way, some other useful information about SAR:

1. SAR collection jobs can be checked in /etc/cron.d/sysstat

2. SAR retention can be checked/adjusted in /etc/sysconfig/sysstat
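Both settings can be checked in one go with something like the sketch below. The function name `show_sysstat_settings` is hypothetical, the default paths are the ones mentioned above (other distributions may keep these files elsewhere), and it assumes retention is set through the `HISTORY` variable in the sysstat configuration file.

```shell
#!/bin/bash
# Print the sar collection schedule and the retention setting.
# Both paths are optional arguments so other locations can be checked too.
show_sysstat_settings() {
    local cron_file="${1:-/etc/cron.d/sysstat}"
    local conf_file="${2:-/etc/sysconfig/sysstat}"

    echo "== Collection jobs ($cron_file) =="
    # Show every line that is neither a comment nor blank
    grep -Ev '^[[:space:]]*(#|$)' "$cron_file"

    echo "== Retention ($conf_file) =="
    # HISTORY holds the number of days of sar data to keep
    grep -E '^[[:space:]]*HISTORY=' "$conf_file"
}

# Usage: show_sysstat_settings
```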

OK, so how do we generate the SAR files?

Use the command: sar -A

Example:

    [root@grepora-srvr ~]# cd /var/log/sa/
    [root@grepora-srvr sa]# ls -lrt |tail -10
    total 207080
    -rw-r--r-- 1 root root 3337236 Dec 24 23:50 sa24
    -rw-r--r-- 1 root root 3756100 Dec 24 23:53 sar24
    -rw-r--r-- 1 root root 3337236 Dec 25 23:50 sa25
    -rw-r--r-- 1 root root 3756113 Dec 25 23:53 sar25
    -rw-r--r-- 1 root root 3337236 Dec 26 23:50 sa26
    -rw-r--r-- 1 root root 3756104 Dec 26 23:53 sar26
    -rw-r--r-- 1 root root 3337236 Dec 27 23:50 sa27
    -rw-r--r-- 1 root root 3756096 Dec 27 23:53 sar27
    -rw-r--r-- 1 root root 3337236 Dec 28 23:50 sa28
    -rw-r--r-- 1 root root 3756100 Dec 28 23:53 sar28
    -rw-r--r-- 1 root root 2317668 Dec 29 16:30 sa29
    [root@grepora-srvr sa]# sar -A -f sa29 > sa29.txt
    [root@grepora-srvr sa]# cat sa29.txt |head -10
    Linux 3.8.13-118.4.2.el6uek.x86_64 (grepora-srvr) 12/29/2017 _x86_64_ (40 CPU)
    12:00:01 AM CPU %usr %nice %sys %iowait %steal %irq %soft %guest %idle
    12:10:01 AM all 97.74 0.00 1.71 0.01 0.00 0.00 0.52 0.00 0.02
    12:10:01 AM 0 96.46 0.00 2.59 0.02 0.00 0.00 0.92 0.00 0.01
    12:10:01 AM 1 98.55 0.00 1.24 0.01 0.00 0.00 0.20 0.00 0.00
    12:10:01 AM 2 97.83 0.00 2.04 0.01 0.00 0.00 0.11 0.00 0.02
    12:10:01 AM 3 98.44 0.00 1.41 0.01 0.00 0.00 0.14 0.00 0.01
    12:10:01 AM 4 98.28 0.00 1.65 0.00 0.00 0.00 0.06 0.00 0.01
    12:10:01 AM 5 98.27 0.00 1.70 0.00 0.00 0.00 0.02 0.00 0.00
    [root@grepora-srvr sa]#

You can then copy this file from the client server to your own machine and import it using the KSAR interface. It’s pretty intuitive and easy to use.
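When the client server is only reachable through a jumpbox, the copy could look roughly like this. It is a sketch under a few assumptions: the function name `fetch_sar_file` and the `DRY_RUN` variable are my own, the host names in the example are placeholders, and the local machine runs OpenSSH 7.3 or later, where the `ProxyJump` option is available.

```shell
#!/bin/bash
# Copy a sar text file from a client server through a jumpbox.
# Arguments: jumpbox, remote host, remote path, local destination (default ".").
fetch_sar_file() {
    local jump="$1" remote="$2" remote_path="$3" dest="${4:-.}"
    if [ -n "$DRY_RUN" ]; then
        # Only show the command that would be executed
        echo "scp -o ProxyJump=$jump $remote:$remote_path $dest"
    else
        # ProxyJump tunnels the connection through the jumpbox
        scp -o "ProxyJump=$jump" "$remote:$remote_path" "$dest"
    fi
}

# Example (hypothetical hosts):
# fetch_sar_file me@jumpbox root@grepora-srvr /var/log/sa/sa29.txt .
```

Setting `DRY_RUN=1` before the call prints the scp command instead of running it, which is handy for checking the hosts and paths first.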

But how do we generate a file for all available days, or for a set of specific past days?

Here is a script I use for this:

    #!/bin/bash
    # Concatenate the "sar -A" output of several days into a single text file.
    SA_DIR=/var/log/sa
    OUT="/tmp/sar-$(hostname)-multiple.txt"

    ### All Days of SAR
    DT=$(ls "$SA_DIR"/sa[0-9][0-9] | sed "s|^$SA_DIR/sa||")
    ## Explicit Days
    #DT="07 08 09"
    #DT="12"
    # Today
    #DT=$(date +"%d")

    # Start with an empty output file
    : > "$OUT"
    for i in $DT; do
        # LC_ALL=C keeps the output locale-neutral
        LC_ALL=C sar -A -f "$SA_DIR/sa$i" >> "$OUT"
    done
    ls -l "$OUT"

After this, you can copy the generated file to your PC and generate the same report.
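Before copying the file, it can be worth confirming which days actually ended up in it. A minimal sketch, assuming every sar -A dump begins with a header line that starts with "Linux" and carries the date in its fourth field, as in the sample output earlier in this post (the function name `list_sar_days` is my own):

```shell
#!/bin/bash
# Print the date of every day contained in a combined sar text file.
# Relies on each "sar -A" dump starting with a line such as:
#   Linux <kernel> (<host>) <date> <arch> (<n> CPU)
list_sar_days() {
    local file="$1"
    awk '/^Linux / { print $4 }' "$file"
}

# Usage: list_sar_days "/tmp/sar-$(hostname)-multiple.txt"
```

If a day is missing from the list, the corresponding sa file was probably absent or unreadable when the loop ran.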

Hope you enjoy it!

Cheers!

Matheus.